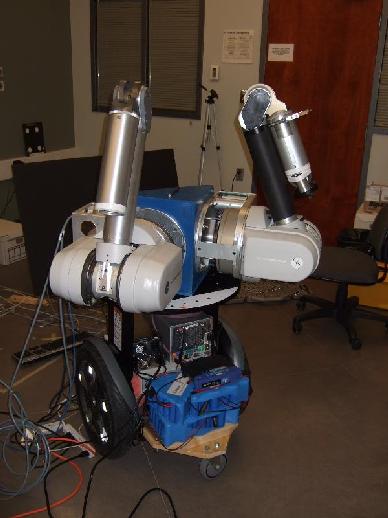

Two arm mobile manipulator picking up a box.

In Visual Servoing, the motion of a robot is controlled based on video image information. A challenge is that cameras encode information in a 2D pixel coordinate system, while most robots have rotational joints and motors. The conventional, position-based approach is to calibrate both the camera and the robot in a common Cartesian base coordinate frame. However, such calibration is cumbersome and error-prone. Our approach is instead to do away with the base frame and directly estimate the coordinate transform from the cameras (usually one or two) to the robot motor space. The key insight is that when the camera observes the motion of the robot, one gains partial information about this coordinate transform. This partial information can be gathered over time into either a local linear model (a Visual-Motor Jacobian) or a global non-linear model (a Visual-Motor Function). For more information, see the Visual Servoing page.
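
As a rough illustration of how observed motions can be accumulated into a local linear model, the sketch below uses a Broyden-style rank-one secant update. This is one common way to estimate a Jacobian from motion data and is assumed here for illustration; it is not necessarily the exact estimator used in our systems. The function names, the 2-joint/2-feature setup, and the linear "camera" map `A` are all hypothetical.

```python
def broyden_update(J, dq, dy, alpha=1.0):
    """Return an updated visual-motor Jacobian estimate.

    J  : current estimate, list of rows (image features x motor joints)
    dq : observed motor displacement
    dy : observed image-feature displacement caused by dq
    Applies J <- J + alpha * (dy - J dq) dq^T / (dq^T dq), which only
    corrects J along the direction of motion dq (partial information).
    """
    denom = sum(d * d for d in dq)
    if denom < 1e-12:  # no motion observed, so nothing can be learned
        return [row[:] for row in J]
    J_new = []
    for row, dyi in zip(J, dy):
        predicted = sum(r * d for r, d in zip(row, dq))
        err = dyi - predicted
        J_new.append([r + alpha * err * d / denom for r, d in zip(row, dq)])
    return J_new


def estimate_from_motions(camera, q0, motions, J0):
    """Accumulate a Jacobian estimate from a sequence of small motions.

    camera : hypothetical function mapping joint values to image features
    """
    q, J = list(q0), [row[:] for row in J0]
    y = camera(q)
    for dq in motions:
        q = [qi + di for qi, di in zip(q, dq)]
        y_new = camera(q)
        dy = [a - b for a, b in zip(y_new, y)]
        J = broyden_update(J, dq, dy)
        y = y_new
    return J


# Hypothetical linear camera map, used only to check the estimator.
A = [[2.0, 0.5], [-1.0, 3.0]]

def linear_camera(q):
    return [sum(a * qi for a, qi in zip(row, q)) for row in A]

J_est = estimate_from_motions(
    linear_camera, [0.0, 0.0],
    motions=[[0.1, 0.0], [0.0, 0.1]],
    J0=[[0.0, 0.0], [0.0, 0.0]],
)
```

Each motion fixes the estimate only along the direction moved, so in this toy linear case two motions along independent joint directions are enough to recover the full 2x2 map. With a real camera the map is non-linear, and the estimate stays valid only locally and has to keep being updated as the robot moves.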

In Tele-Robotics, a human operator controls the motions of a distant robot. One challenge is to make the visual display of the remote scene and motions as life-like as possible. Current systems just show video camera images from the robot scene, which has several problems. The biggest is usually the round-trip delay between the operator issuing a command and seeing its effects, which makes precision manipulation difficult. It has been shown that delays of just tenths of a second degrade tele-manipulation performance. Other challenges are the limited field of view and (more rarely nowadays) limited bandwidth. Our solution is to use computer vision techniques to capture, in real time, a model of the remote scene geometry and appearance. This model is incrementally transmitted to the operator site. The model allows immediate rendering of any viewpoint using image-based rendering, instead of waiting for the delayed real video. More information is available on the Predictive Display page.

A long-term goal for researchers is to make a robot with human-like sensing and manipulation capabilities. Until now, most research has focused on components in isolation, e.g. arms, mobile platforms, and vision systems. Recently, however, research platforms integrating two arms, mobility, and sensing have been built (e.g. NASA's Robonaut), and both the Canadian Space Agency and NASA envision mobile manipulation being used for future missions in planetary exploration and on-orbit repair. We have projects with the Canadian Space Agency and three space-industry companies to develop vision-based tele-robotics systems by combining our visual servoing and predictive display research. See more information on our Space Telerobotics Project.

Video: Segway and WAM moving and balancing

Video: Segway and WAM picking up a cup

The WAM arm is a very lightweight, wire-driven arm. Our brand-new version has all the motor controllers and amplifiers integrated next to the motors. Getting rid of the conventional amplifier cabinet (about the size of a 12 cu ft refrigerator) makes the whole arm very mobile. Here we have mounted it on a Segway mobile robot. The setup is statically unstable, but balances itself dynamically by solving an inverted pendulum problem.
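
To give a feel for what dynamic balancing involves, here is a minimal sketch of a linearized inverted pendulum stabilized by feedback on tilt angle and tilt rate. It is a toy model under stated assumptions (a point mass on a massless rod, with base acceleration as the control input) and is not the Segway's actual controller. The function name, gains, and parameters are hypothetical.

```python
def simulate_balance(kp, kd, theta0=0.1, length=1.0, g=9.81,
                     dt=0.001, t_end=5.0):
    """Simulate the tilt angle (rad, 0 = upright) and return its final value.

    Linearized model: theta'' = (g * theta - a) / length, where the
    commanded base acceleration is a = kp * theta + kd * theta_dot.
    The base accelerates in the direction of the lean to catch the fall.
    """
    theta, omega = theta0, 0.0
    for _ in range(int(round(t_end / dt))):
        accel_cmd = kp * theta + kd * omega          # PD feedback on tilt
        alpha = (g * theta - accel_cmd) / length     # angular acceleration
        omega += alpha * dt                          # semi-implicit Euler step
        theta += omega * dt
    return theta


balanced = simulate_balance(kp=30.0, kd=8.0)   # kp > g: tilt decays toward 0
falling = simulate_balance(kp=0.0, kd=0.0)     # no control: tilt grows
```

In this model the upright pose is an unstable equilibrium. Without feedback any small tilt grows, while a proportional gain larger than `g` together with some damping drives the tilt back toward zero.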

We have integrated two WAM arms on a Segway RMP mobile base. For computing, we added two quad-core PCs built from low-power components, a wireless router, and a battery power system. The mobile manipulator can be tele-operated over the wireless connection from a laptop. Our research is to develop and test vision-based semi-autonomous routines (also known as supervisory control or tele-assistance) with this setup.
